Convolutional Neural Networks

Deep Convolutional Neural Networks (CNNs) re-entered the computer vision community after the breakthrough paper of Krizhevsky et al. [1], which demonstrated large-scale image category recognition with remarkable success. In 2012, their CNN-based algorithm outperformed competing teams from many renowned institutions by a significant margin. This success sparked enormous interest in neural networks in computer vision, to the extent that most successful methods now use them.

The convolutional network is an extremely flexible classifier that can fit very complex recognition and regression problems while generalizing well. The network consists of a nested composition of non-linear functions. It is usually deep, i.e. it has many layers, and it typically has more parameters than there are data samples in the training set. There are mechanisms to prevent overfitting. One of the basic tricks is the use of convolutional layers: the network learns shift-invariant filters instead of individual weights for every input pixel. Because the weights are shared across image positions, far fewer parameters are required.
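To make the effect of weight sharing concrete, the following minimal sketch counts the parameters of a fully connected layer and of a convolutional layer on the same input. The layer sizes (a 160x160 RGB image, 64 output units or filters, 3x3 kernels) are assumptions chosen for illustration and are not taken from the assignment. The two layers do not compute the same thing, so the comparison only shows the order of magnitude.

```python
def dense_params(in_h, in_w, in_c, out_units):
    """Weights + biases of a fully connected layer on a flattened image."""
    return in_h * in_w * in_c * out_units + out_units


def conv_params(kernel, in_c, out_c):
    """Weights + biases of a conv layer; independent of the image size."""
    return kernel * kernel * in_c * out_c + out_c


# Hypothetical sizes: 160x160 RGB input, 64 output units / 64 filters of 3x3.
fc = dense_params(160, 160, 3, 64)
conv = conv_params(3, 3, 64)
print(fc, conv)  # 4915264 1792
```

The fully connected layer needs one weight per input pixel and output unit, whereas the convolutional layer reuses the same small filters at every image position.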

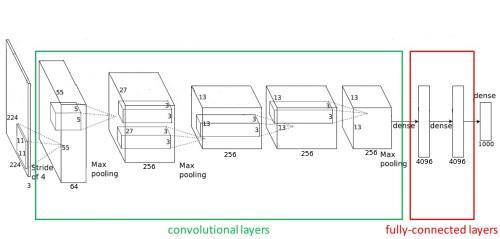

Fig. 1: Architecture of a Deep Convolutional Neural Network. Figure adapted from [1].

Usually, the architecture of an image classification CNN is composed of several convolutional layers (which are meant to learn a representation) followed by a few fully connected layers (which implement the non-linear classification stage on top of the invariant representation), see Fig. 1.
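The layout described above can be sketched in PyTorch roughly as follows. This is an illustrative toy model, not the `SimpleCNN` expected by the assignment: the class name `TinyCNN`, the channel counts, the input size of 160x160 and the 10 output classes are all assumptions.

```python
import torch
import torch.nn as nn


class TinyCNN(nn.Module):
    """Illustrative only: conv feature extractor + fully connected classifier."""

    def __init__(self, num_classes: int = 10):
        super().__init__()
        # Convolutional part: learns the image representation.
        self.features = nn.Sequential(
            nn.Conv2d(3, 16, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(2),
            nn.Conv2d(16, 32, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(2),
            nn.AdaptiveAvgPool2d(4),  # fixed 4x4 spatial size for any input
        )
        # Fully connected part: non-linear classifier on top of the features.
        self.classifier = nn.Sequential(
            nn.Flatten(),
            nn.Linear(32 * 4 * 4, 64),
            nn.ReLU(inplace=True),
            nn.Linear(64, num_classes),
        )

    def forward(self, x):
        return self.classifier(self.features(x))


model = TinyCNN(num_classes=10)
logits = model(torch.randn(2, 3, 160, 160))  # batch of 2 random RGB images
print(logits.shape)  # torch.Size([2, 10])
```

The adaptive pooling layer is a design choice made here so that the classifier input size does not depend on the image resolution.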

In the following two labs, you will get hands-on experience with CNNs. In the first part, you will train your own network for image classification from scratch. We will use the PyTorch library for that.

1. Training CNN for image classification

In this lab we will train a convolutional neural network for image classification from scratch. Training is typically done on GPUs, often several of them, because the process is very computationally intensive. This assignment, however, is designed to run on a CPU: a single training epoch takes 1-5 minutes on a CPU, depending on the CNN architecture and hardware.

Specifically, one training epoch takes 90 s on a mobile i7 CPU and 26 s on a mobile GT940M GPU.

You will implement a CNN, a training and validation loop, a custom dataset loader, and a couple of helper functions.

Download and installation

First, make sure that you have a conda virtual environment set up. If not, create one from here: https://gitlab.fel.cvut.cz/mishkdmy/mpv-python-assignment-templates/-/tree/master/conda_env_yaml

Second, pull the assignment template from here: https://gitlab.fel.cvut.cz/mishkdmy/mpv-python-assignment-templates/-/blob/master/assignment_6_7_cnn_template/training-imagenette-CNN.ipynb

You can download the data via the corresponding section of the notebook or directly from https://s3.amazonaws.com/fast-ai-imageclas/imagenette2-160.tgz

The task

For this lab, all explanations are contained in the corresponding notebook. Please refer to it.

Introduction into PyTorch Image Processing.

To fulfil this assignment, you need to submit these files (all packed in one .zip file) into the upload system:

- results.html - Converted notebook. Transform your notebook training-imagenette-CNN.ipynb into the file results.html using `jupyter nbconvert --to html training-imagenette-CNN.ipynb --EmbedImagesPreprocessor.resize=small --output results.html`
- submission.csv - File with predictions on the test set.
- cnn_training.py - File with the following methods implemented:
  - get_dataset_statistics - function that calculates the dataset mean and standard deviation (per pixel)
  - SimpleCNN - class that implements the CNN
  - weight_init - function that initializes the CNN
  - validate - function that performs validation
  - train_and_val_single_epoch - function that trains the CNN on a single pass through the train data loader
  - lr_find - function that runs a short training to find the optimal learning rate
  - TestFolderDataset - class that reads images in a folder and serves as the test dataset
  - get_predictions - function that predicts class indexes for the images in a data loader

Use the assignment template. When preparing the zip file for the upload system, do not include any directories; the files have to be in the zip file root. Please upload the same zip to both tasks: 01_cnnclf and 02_tourn.
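One way to guarantee that all files end up in the zip root is to build the archive with Python's standard `zipfile` module and pass only the file name as `arcname`. The sketch below is a suggestion, not part of the assignment template: the helper name `pack_submission`, the archive name `submission.zip` and the placeholder files are assumptions used for the demonstration.

```python
import tempfile
import zipfile
from pathlib import Path


def pack_submission(files, zip_path):
    """Write the given files into zip_path at the archive root (no directories)."""
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as zf:
        for f in files:
            f = Path(f)
            zf.write(f, arcname=f.name)  # arcname drops the directory part
    return zip_path


# Demonstration with placeholder files in a temporary working directory.
with tempfile.TemporaryDirectory() as tmp:
    src = Path(tmp) / "work"
    src.mkdir()
    names = ["results.html", "submission.csv", "cnn_training.py"]
    for name in names:
        (src / name).write_text("placeholder")
    archive = pack_submission([src / n for n in names], Path(tmp) / "submission.zip")
    with zipfile.ZipFile(archive) as zf:
        packed = sorted(zf.namelist())

print(packed)  # ['cnn_training.py', 'results.html', 'submission.csv']
```

Listing the archive contents before uploading is a quick check that no entry contains a directory prefix.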

Your code and notebook will be checked manually. submission.csv will be used for two things. First, to evaluate the quality of your trained network (task 08_cnnclf). Second, to award bonus points based on its performance relative to other submissions: one point per 20% quantile, e.g. the top 20% earns 5 points, the top 40% earns 4 points, and so on. To get any points, your classification accuracy must be above 70%.
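The bonus rule can be read as the following arithmetic. This is only one interpretation of the text above, not the official scoring code: the function name `bonus_points`, the 1-based ranking and the handling of quantile boundaries (rank 20 of 100 still counts as top 20%) are assumptions.

```python
def bonus_points(rank, num_submissions, accuracy):
    """Bonus points for a submission; rank is 1-based (1 = best accuracy)."""
    if accuracy <= 0.70:  # the text requires accuracy above 70%
        return 0
    # Integer ceiling of rank / (20% of submissions): 1 = top 20%, ..., 5 = last 20%.
    quantile = (rank * 5 + num_submissions - 1) // num_submissions
    return 6 - quantile


print(bonus_points(rank=15, num_submissions=100, accuracy=0.85))  # 5
print(bonus_points(rank=35, num_submissions=100, accuracy=0.85))  # 4
print(bonus_points(rank=15, num_submissions=100, accuracy=0.65))  # 0
```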

2. Image colorization with Unet

The task

For this lab, all explanations are contained in the corresponding notebook. Please refer to it.

Important: GitLab does not show all images. Download the notebook and check it locally.

To fulfil this assignment, you need to submit these files (all packed in one .zip file) into the upload system:

- results.html - Converted notebook. Transform your notebook training-colorization-Unet.ipynb into the file results.html using `jupyter nbconvert --to html training-colorization-Unet.ipynb --EmbedImagesPreprocessor.resize=small --output results.html`
- colorization.py - File with the following methods implemented:
  - UnetFromPretrained - class that implements a Unet from a pretrained network
  - ContentLoss - class that implements the "content" or "perceptual" loss
- model.pt - Weights of your saved model

Use the assignment template. When preparing the zip file for the upload system, do not include any directories; the files have to be in the zip file root. Task: 10_color.

Your code and notebook will be checked manually.

References

- [1] A. Krizhevsky, I. Sutskever, G. E. Hinton. ImageNet Classification with Deep Convolutional Neural Networks. In NIPS, 2012.

- [2] Y. LeCun, L. Bottou, Y. Bengio, P. Haffner. Gradient-Based Learning Applied to Document Recognition. Proceedings of the IEEE, 86(11):2278-2324, November 1998.

Jan Čech 2016/04/26 17:07