Table of Contents

Tutorial 3 - reinforcement learning III.

Problem 1 - Passive reinforcement learning

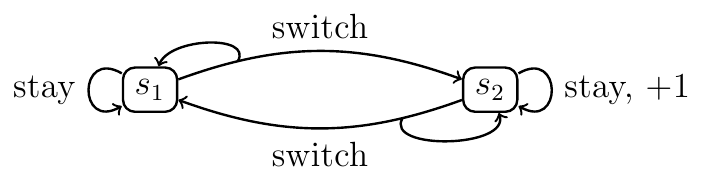

Consider the following MDP. Assume that reward is in the form $r(s,a)$, i.e., $r: S \times A \mapsto \mathbb{R}$. Set $\gamma = \frac{1}{2}$.

Suppose that you have seen the following sequence of states, actions, and rewards:

$$

s_1, \mathrm{switch},

s_2, \mathrm{stay}, +1,

s_2, \mathrm{stay}, +1,

s_2, \mathrm{switch},

s_1, \mathrm{stay},

s_1, \mathrm{switch},

s_1, \mathrm{switch},

s_1, \mathrm{stay},

s_1, \mathrm{switch},

s_2, \mathrm{stay}, +1,

{\color{lightgray} s_{\color{lightgray} 2}}

$$

Suppose that you have seen the following sequence of states, actions, and rewards:

$$

s_1, \mathrm{switch},

s_2, \mathrm{stay}, +1,

s_2, \mathrm{stay}, +1,

s_2, \mathrm{switch},

s_1, \mathrm{stay},

s_1, \mathrm{switch},

s_1, \mathrm{switch},

s_1, \mathrm{stay},

s_1, \mathrm{switch},

s_2, \mathrm{stay}, +1,

{\color{lightgray} s_{\color{lightgray} 2}}

$$

- What is $\hat{U}^{\pi}(s_i)$ calculated by the Every-visit Monte-Carlo policy evaluation algorithm (in AIMA called Direct Utility Estimation algorithm)?

- How would You estimate the transition model $\hat{\mathcal{P}}$ so that the policy can be evaluated by the Policy iteration algorithm? (This approach is sometimes called Adaptive Dynamic Programming algorithm.)

- What are state values estimated by a Temporal Difference learning agent after two steps? Assume that $\alpha=0.1$ and all values are initialized to zero.

Problem 2 - Greedy policy and value function

Decide whether the following statement is true or false: If a policy $\pi$ is greedy with respect to its own value function $U^{\pi}$, then this policy is an optimal policy.

Problem 3 - GLIE and convergence

Do the following exploration/exploitation schemes fulfill the 'infinite exploration' and 'greedy in limit' conditions? Which lead to convergence of $Q$-values in $Q$-learning and which lead to convergence of $Q$-values in SARSA. Does anything change if we are interested in the convergence of policy? $N_{s,a}$ denotes the number of times when action $a$ was taken in state $s$. $N_s$ is defined similarly.

- a random policy

- $$ \pi(s) = \begin{cases} a, & \mbox{if } N_{s,a} \leq 100, \\ \mathop{\mathrm{arg\, max}}_a Q(s,a), & \mbox{otherwise}.\end{cases} $$

- $\varepsilon$-greedy policy with $\varepsilon = \frac{1}{N_s^2}$

- $\varepsilon$-greedy policy with $\varepsilon = \frac{1,000}{999+N_s}$

- $\varepsilon$-greedy policy with $\varepsilon = \frac{1}{\sqrt{N_s}}$

Problem 4 - $Q$-learning for graph search

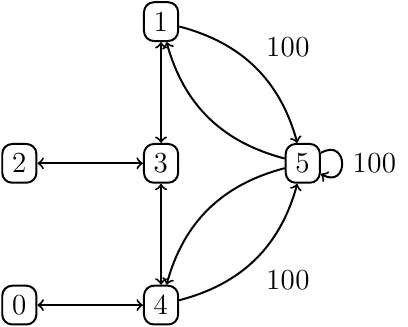

Consider the following MDP with $\gamma=0.8$, $r(5) = 100$, $r(\cdot) = 0$.

The initial matrix of $Q$-values is

$$

Q(s,a) = \begin{bmatrix}

- & - & - & - & 0 & - \\

- & - & - & 0 & - & 0 \\

- & - & - & 0 & - & - \\

- & 0 & 0 & - & 0 & - \\

0 & - & - & 0 & - & 0 \\

- & 0 & - & - & 0 & 0

\end{bmatrix}.

$$

Consider path $1-5-1-3$ and constant learning rate $\alpha = 0.1$. Show changes in $Q$ values after the agent-environment interaction.

The initial matrix of $Q$-values is

$$

Q(s,a) = \begin{bmatrix}

- & - & - & - & 0 & - \\

- & - & - & 0 & - & 0 \\

- & - & - & 0 & - & - \\

- & 0 & 0 & - & 0 & - \\

0 & - & - & 0 & - & 0 \\

- & 0 & - & - & 0 & 0

\end{bmatrix}.

$$

Consider path $1-5-1-3$ and constant learning rate $\alpha = 0.1$. Show changes in $Q$ values after the agent-environment interaction.

References

Problems 1,2, and 4 were adapted from Richard Sutton's 609 course. You may find the related materials on http://www.incompleteideas.net/book/the-book-2nd.html.