
HW2: Catch me if you Kajinek

A famous Czech murderer has escaped from prison and is fleeing through a crowded environment. Our agency has sent drones to map the area from a top-down view. You are connected to the drones remotely, but the drones are not prepared to detect a specific person. Fortunately, you have an image from the last time Kajinek was seen. He was wearing his favorite vest, and he is known never to take it off. There is a good chance he can be spotted that way.

Primary objective

Estimate the cell indices in the image that correspond to the location of the murderer. Keep in mind that we need the original resolution to localize the target precisely. Make sure that your output matches the input resolution (Height, Width) and that each output cell refers to the same location as the corresponding input cell.

Secondary (Optional) objective

Fire a homing missile to destroy the target. You can use the optimization procedure from the last project (HW1) to guide the missile's trajectory to the detected position, i.e. a synthesis of vision and control. Should you choose to accept this task, you will be rewarded with 3 bonus points, since now we can fire the guy operating the missiles.

How to proceed

- Use all available information (one image) to learn a robust detector (one convolution) from the annotated position in the past image of Kajinek.

- Implement the convolution in the code template and find the image cell with the maximum probability of containing Kajinek.

- Our scientists suspect that, if the detector is trained correctly, you will observe a pattern in the learned weights.

- The learned weights and the filled-in code template will be uploaded to our brutal drone system (BRUTE).
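
The steps above could be sketched as follows. This is a minimal sketch, not the reference solution: it assumes PyTorch, uses a synthetic random image with a painted green patch and a one-hot label in place of the real data, and the kernel size (5), the loss (cross-entropy over all cells), the optimizer, and the learning rate are all illustrative choices.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)

# Synthetic stand-ins for the real data (assumption): a random RGB image
# and a label map with a single 1 at the annotated cell.
H, W = 32, 32
image = torch.rand(3, H, W)
target_row, target_col = 10, 20
# Paint a "vest": a pure-green 3x3 patch centered on the annotated cell.
image[:, 9:12, 19:22] = torch.tensor([0.0, 1.0, 0.0]).view(3, 1, 1)
label = torch.zeros(H, W)
label[target_row, target_col] = 1.0

# One learnable kernel stored as (channels, kernel_h, kernel_w).
kernel_size = 5  # hypothetical choice; odd so that the padding is symmetric
weights = torch.zeros(3, kernel_size, kernel_size, requires_grad=True)
optimizer = torch.optim.Adam([weights], lr=0.05)

def forward(x, w):
    """Apply one zero-padded convolution; returns an (H, W) logit map."""
    out = F.conv2d(x.unsqueeze(0), w.unsqueeze(0), padding=w.shape[-1] // 2)
    return out[0, 0]

# Treat every cell as a class; the annotated cell is the correct one.
target_index = torch.tensor([target_row * W + target_col])
for step in range(300):
    optimizer.zero_grad()
    logits = forward(image, weights)
    loss = F.cross_entropy(logits.reshape(1, -1), target_index)
    loss.backward()
    optimizer.step()

# Convert the flat arg-max back to (row, col) indices.
predicted = divmod(int(forward(image, weights).argmax()), W)
print(predicted, float(loss))
```

Plotting each channel of `weights` after training is one way to look for the pattern mentioned in the list above.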

We have prepared an operation point with the data and code you need to proceed.

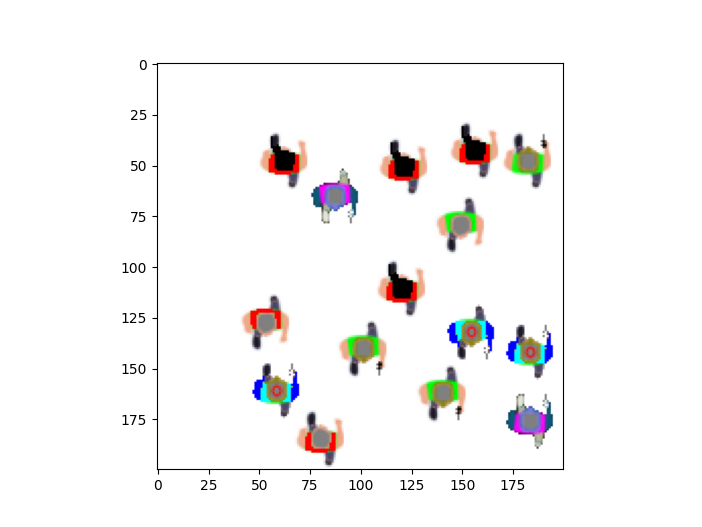

The last known position is shown in the image of the crowded market (left), where our human operator pointed out the location of the true green vest (encoded as 1 at the green point in the image). The top secret image and label are attached in the operation point. You can load the data via the np.load function and transpose the axes to the right dimensions.
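
As an illustration of the loading step, the sketch below saves and reloads a fake image. The file name and the on-disk axis order (Height, Width, RGB) are assumptions made for the example; check the shape of the real arrays before transposing.

```python
import os
import tempfile

import numpy as np

# Stand-in for the real file (assumption): the actual file name and axis
# order are not stated here, so a fake image is saved as (Height, Width, RGB).
tmp_dir = tempfile.mkdtemp()
image_path = os.path.join(tmp_dir, "example_image.npy")  # hypothetical name
np.save(image_path, np.random.rand(200, 200, 3).astype(np.float32))

image_hwc = np.load(image_path)
print(image_hwc.shape)  # (200, 200, 3)

# Move the channel axis to the front to get (RGB, Height, Width).
image_chw = np.transpose(image_hwc, (2, 0, 1))
print(image_chw.shape)  # (3, 200, 200)
```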

Our drone system will take your hw2_template file and import the class called “Conv2D” with the methods set_weights and forward, as you can see in the template. The input to your model has shape (3, 200, 200), i.e. (RGB, Height, Width); the output of the Conv2D class has shape (Height, Width), without the channel dimension. The weights will be loaded from your uploaded file and installed via the set_weights function. The system executes the following commands:

    # Initialization Sequence #
    from hw2_template import Conv2D

    weights_path = f'hw2_weights.pt'  # needs to be in the same root as hw2_template
    learned_weights = torch.load(weights_path)
    conv = Conv2D(3, kernel_size=learned_weights[0,:,:].shape)  # Weight tensor as Channels x Height x Width
    conv.set_weights(weights=learned_weights)

    # Execution Sequence per image #
    logits = conv.forward(x_test)
    kajinek_position = np.argwhere(logits == logits.max())
    true_position = torch.tensor(np.argwhere(label_test == 1.)).T
    L2_result = torch.sqrt(((kajinek_position - true_position) ** 2).sum())

    if L2_result < 8:
        print("Target Destroyed!")
        score = 13
    else:
        print("Target Missed! Innocent people are dying!")
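
To check a prediction locally before uploading, the comparison above can be mirrored in a small helper. This is a sketch under assumptions: the helper name `localization_error` is hypothetical, both inputs are taken to be (Height, Width) torch tensors, and the label is assumed to contain exactly one cell equal to 1.

```python
import torch

def localization_error(logits, label):
    """Euclidean distance (in cells) between the arg-max of `logits`
    and the first cell of `label` that equals 1."""
    width = logits.shape[1]
    # Flat arg-max converted back to (row, col); ties resolve to one cell.
    row, col = divmod(int(logits.argmax()), width)
    predicted = torch.tensor([row, col], dtype=torch.float32)
    true = torch.nonzero(label == 1.0)[0].to(torch.float32)
    return float(torch.sqrt(((predicted - true) ** 2).sum()))

# Toy example: the prediction is 3 rows and 4 columns away from the label.
logits = torch.zeros(200, 200)
logits[53, 64] = 1.0
label = torch.zeros(200, 200)
label[50, 60] = 1.0

error = localization_error(logits, label)
print(error)      # 5.0
print(error < 8)  # True, i.e. within the hit radius used above
```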

- Make sure your Conv2D class uses the expected argument names (def set_weights(weights=your_learned_weights)).

- The weight tensor is stored as a three-dimensional tensor (channels = 3, kernel_size in dim 1, kernel_size in dim 2); you can use the code above to check that.

- Do not forget that the output must have the same spatial shape (Height, Width) as the input. If you learn and code your convolution properly, you do not have to worry about this.

- If the learned weights work on the training image and are not hard-coded, they should work on the test image as well.
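
A skeleton that satisfies the checklist above might look like the following. It is only a sketch: the real hw2_template may define the constructor and internals differently. The only details taken from the text are the class name Conv2D, the call Conv2D(3, kernel_size=...), the methods set_weights(weights=...) and forward, and the tensor shapes. Zero padding by half the kernel size and an odd kernel size are assumptions that keep the output at (Height, Width).

```python
import torch
import torch.nn.functional as F

class Conv2D:
    """Single-filter convolution mapping (C, H, W) to (H, W)."""

    def __init__(self, in_channels, kernel_size):
        # kernel_size is accepted as an int or a (height, width) pair,
        # since the initialization sequence passes a tensor shape.
        if isinstance(kernel_size, int):
            kernel_size = (kernel_size, kernel_size)
        kernel_h, kernel_w = kernel_size
        self.weights = torch.zeros(in_channels, kernel_h, kernel_w)

    def set_weights(self, weights):
        # Expect a (channels, kernel_h, kernel_w) tensor.
        assert weights.dim() == 3, "weights must be 3-dimensional"
        self.weights = weights.to(torch.float32)

    def forward(self, x):
        # Zero-pad by half the kernel so odd kernels preserve H and W.
        pad = (self.weights.shape[1] // 2, self.weights.shape[2] // 2)
        out = F.conv2d(x.unsqueeze(0), self.weights.unsqueeze(0), padding=pad)
        return out[0, 0]  # drop the batch and channel dimensions

# Mirror the initialization sequence with stand-in weights.
learned_weights = torch.randn(3, 7, 7)
conv = Conv2D(3, kernel_size=learned_weights[0, :, :].shape)
conv.set_weights(weights=learned_weights)
logits = conv.forward(torch.rand(3, 200, 200))
print(logits.shape)  # torch.Size([200, 200])
```

With stride 1, the output height is H + 2p - k + 1 for padding p and kernel height k, so p = (k - 1) / 2 preserves the height when k is odd; the same holds for the width.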

Good luck, we are counting on you! Any problems should be reported to vacekpa2@fel.cvut.cz