
Reinforcement Learning (4th assignment)

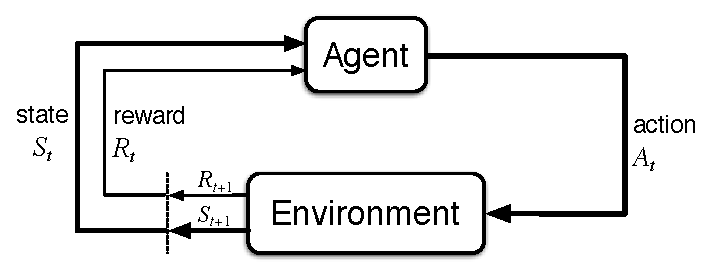

Reinforcement Learning

Implement the learn_policy(env) method in a file named rl_agent.py, which you will upload to Brute. This time, env is of type HardMaze. The expected output is a policy: a dictionary keyed by states, whose values are taken from [0,1,2,3], corresponding to up, right, down, left (N, E, S, W). The learning limit on one tile is 20 seconds. Be sure to turn off visualizations before submitting; see VERBOSITY in rl_sandbox.py.
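To make the expected return format concrete, here is a minimal sketch of a policy dictionary and a sanity check on its values. The `(x, y)` tuple keys, the example states, and the helper name `is_valid_policy` are assumptions made for illustration; the assignment only says that the dictionary is keyed by states.

```python
# Action codes as given in the assignment: 0 = up (N), 1 = right (E),
# 2 = down (S), 3 = left (W).
ACTION_NAMES = {0: "N", 1: "E", 2: "S", 3: "W"}


def is_valid_policy(policy):
    """Return True if every state maps to one of the four action codes."""
    return all(action in ACTION_NAMES for action in policy.values())


# Hypothetical 3-state example; keying states by (x, y) tuples is an assumption.
example_policy = {(0, 0): 1, (1, 0): 1, (2, 0): 2}
```

A check like this can be run on the dictionary just before returning it from learn_policy.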

Again, we will use the cubic environment. Download the updated kuimaze.zip package. Visualization and initialization work the same way as before, but the basic idea of working with the environment is different. We do not have a map; we can only explore the environment through the main method env.step(action). The environment simulator keeps track of the current state. We are looking for the best path from start to finish, that is, the path with the highest expected sum of discounted rewards.

obv, reward, done, _ = env.step(action)

state = obv[0:2]

You can get the action by random selection:

action = env.action_space.sample()
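The two calls above can be combined into a random exploration loop. The sketch below is runnable without kuimaze: `ToyCorridorEnv` and `ToyActionSpace` are hypothetical stand-ins that only mimic the return shape of env.step(action), not the behavior of HardMaze. The `reset()` method and the reward values are also assumptions.

```python
import random


class ToyActionSpace:
    """Hypothetical stand-in for env.action_space with four discrete actions."""

    def sample(self):
        return random.randrange(4)


class ToyCorridorEnv:
    """Hypothetical 1-D corridor used only to make the loop runnable.

    It is NOT HardMaze; it merely mimics the step() return shape shown above.
    """

    def __init__(self, length=4):
        self.length = length
        self.action_space = ToyActionSpace()
        self.x = 0

    def reset(self):
        self.x = 0
        return (self.x, 0, 0)  # observation; the first two items are the state

    def step(self, action):
        if action == 1:  # right (E)
            self.x = min(self.x + 1, self.length - 1)
        elif action == 3:  # left (W)
            self.x = max(self.x - 1, 0)
        done = self.x == self.length - 1
        reward = 1.0 if done else -0.04  # arbitrary illustrative rewards
        return (self.x, 0, 0), reward, done, None


def run_random_episode(env, max_steps=1000):
    """Walk randomly until the episode ends; return visited states and total reward."""
    obv = env.reset()
    visited = [tuple(obv[0:2])]
    total_reward = 0.0
    for _ in range(max_steps):
        action = env.action_space.sample()
        obv, reward, done, _ = env.step(action)
        visited.append(tuple(obv[0:2]))
        total_reward += reward
        if done:
            break
    return visited, total_reward
```

Converting the state slice to a tuple makes it hashable, so it can later serve as a dictionary key in the policy.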

The package includes rl_sandbox.py, which shows basic random exploration, a possible initialization of the Q-value table, visualization, and so on.
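As one possible way to use a Q-value table, the following sketch shows tabular Q-learning on plain dictionaries. The assignment does not prescribe this algorithm; the function names, the lazily filled table, and the values of `alpha` and `gamma` are assumptions chosen for illustration.

```python
from collections import defaultdict

NUM_ACTIONS = 4  # up, right, down, left


def make_q_table():
    """Q-table that lazily creates a zero row for each newly seen state."""
    return defaultdict(lambda: [0.0] * NUM_ACTIONS)


def q_update(q_table, state, action, reward, next_state, done,
             alpha=0.1, gamma=0.9):
    """Apply one tabular Q-learning update in place."""
    # A terminal transition has no future value to bootstrap from.
    best_next = 0.0 if done else max(q_table[next_state])
    target = reward + gamma * best_next
    q_table[state][action] += alpha * (target - q_table[state][action])


def greedy_policy(q_table):
    """Map every state in the table to the action with the highest Q-value."""
    return {state: values.index(max(values)) for state, values in q_table.items()}
```

Calling `q_update` after every env.step(action) and `greedy_policy` once learning stops yields a dictionary in the format that learn_policy is expected to return.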

More examples are available on AI-Gym. Let us recall from the lecture that the agent has to act in order to learn about the environment.
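One common way to balance acting for information against acting on what is already known is epsilon-greedy selection. The sketch below is an illustration only; the function name and the `rng` parameter are assumptions, and the assignment does not require this particular strategy.

```python
import random


def epsilon_greedy(q_values, epsilon, rng=random):
    """Pick a random action with probability epsilon, else the best-known one."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return q_values.index(max(q_values))
```

With `epsilon` close to 1 the agent mostly explores; lowering it over time shifts the agent toward exploiting the learned Q-values.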

Grading and deadlines

The task submission deadline can be seen in the Upload system.

The grading is divided as follows:

- Automatic evaluation tests your agent's performance on 5 environments. For each environment, we run the agent n times with the policy you supplied and calculate the average sum of the rewards it earns. This is then compared to the teacher's solution (an optimizing agent). You earn one point for each of the 5 environments in which you reach 80% or more of the teacher's sum of rewards.

- Manual ranking is based on code evaluation (clean code).
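The automatic evaluation described above can be approximated locally with a small helper. This is a sketch under stated assumptions: the real grader's code is not shown in the assignment, the function names are hypothetical, and the comparison assumes the teacher's value is positive.

```python
def average_return(episode_returns):
    """Mean of the summed rewards collected over several rollouts."""
    return sum(episode_returns) / len(episode_returns)


def earns_point(student_returns, teacher_value, threshold=0.8):
    """Check whether the student's average reaches the threshold share of the teacher's value.

    Assumes teacher_value is positive; how the real grader treats
    non-positive values is not described in the assignment.
    """
    return average_return(student_returns) >= threshold * teacher_value
```

Here `student_returns` would hold the total reward of each of the n rollouts of your policy in one environment.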

| Evaluation | min | max | note |
|---|---|---|---|
| RL algorithm quality | 0 | 5 | Evaluation of the algorithm by the automatic evaluation system. |
| Code quality | 0 | 1 | Comments, structure, elegance, code cleanliness, appropriate naming of variables, … |

Code Quality (1 point):

- Appropriate comments, or the code is understandable enough to not need comments

- Reasonably long or short methods / functions

- Variable names (nouns) and functions (verbs) help readability and comprehensibility

- Code pieces do not repeat (no copy-paste)

- Reasonable use of memory and processor time

- Consistent names and code layout throughout the file (separating words in the same way, etc.)

- Clear code structure (e.g. avoid assigning many variables in one line)

You can follow PEP8, although we do not check all PEP8 requirements. Most IDEs (certainly PyCharm) point out PEP8 violations. You can also read some other sources for inspiration about clean code (e.g., here) or about idiomatic Python (e.g., medium, python.net).