
HW 2 - Camera Calibration

Goals

- Download source code package and implement missing parts in module `intrinsics.py` (see below)

- Get familiar with the pinhole camera model, the nonlinear distortion model and the respective calibration routines from OpenCV

- Calibrate camera intrinsic parameters

  - Implement function `intrinsics.calibrate`

  - Using functions

    - `cv2.findChessboardCorners`

    - `cv2.cornerSubPix` (limit the search window size `winSize` to avoid excessive corrections)

    - `cv2.calibrateCamera` (use the rational model without tangential distortion from the lecture: `flags=cv2.CALIB_RATIONAL_MODEL + cv2.CALIB_ZERO_TANGENT_DIST`)

- Convert camera intrinsic parameters (camera matrix and field of view)

  - Implement functions

    - `intrinsics.create_camera_matrix`

    - `intrinsics.camera_field_of_view`

- Undistort images using the intrinsic parameters found and new pinhole camera parameters

  - Implement function `intrinsics.remap`

  - Using

    - `cv2.initUndistortRectifyMap`

    - `cv2.remap` (use bilinear interpolation)

Module Usage

`python multimodal_dataset.py --help`

`python intrinsics.py --help`

Print out command-line parameters of the modules.

`python multimodal_dataset.py download`

`python multimodal_dataset.py calibrate`

Download the multimodal dataset [1] and calibrate intrinsic parameters of its cameras (calls `intrinsics.calibrate`). The calibration is saved into the following JSON files:

- `data/multimodal_ir_intrinsics.json`

- `data/multimodal_left_intrinsics.json`

- `data/multimodal_right_intrinsics.json`

`python intrinsics.py calibrate intrinsics.json --pattern COLS ROWS --unit UNIT -- IMAGE [IMAGE ...]`

`python intrinsics.py calibrate intrinsics.json --pattern COLS ROWS --unit X_UNIT Y_UNIT Z_UNIT -- IMAGE [IMAGE ...]`

`python intrinsics.py calibrate data/multimodal_left_intrinsics.json --pattern 8 9 --unit 0.061 0.047 0.0 -- data/calibration_sequence_I/*Left.ppm`

`python intrinsics.py calibrate data/multimodal_right_intrinsics.json --pattern 8 9 --unit 0.061 0.047 0.0 -- data/calibration_sequence_I/*Right.ppm`

Calibrate a camera using a list of image files and the parameters of the calibration pattern (calls `intrinsics.calibrate`). The calibration is saved to the specified file. The last two commands partially reproduce the calibration done by `python multimodal_dataset.py calibrate` (excluding the infrared camera).
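The calibration performed by these commands could look roughly like the sketch below. It is not the reference solution: the names `pattern_points` and `calibrate_from_files`, the default search window, and the termination criteria are assumptions made for illustration. Only the X and Y spacings of `--unit` are used, and the pattern is assumed to lie in the plane z = 0.

```python
import numpy as np


def pattern_points(cols, rows, unit):
    """3D coordinates of the inner chessboard corners, row by row, with z = 0.

    unit is assumed to be (x_unit, y_unit): corner spacing along columns and rows.
    """
    x_unit, y_unit = unit
    xs, ys = np.meshgrid(np.arange(cols), np.arange(rows))
    points = np.zeros((rows * cols, 3), np.float32)
    points[:, 0] = xs.ravel() * x_unit
    points[:, 1] = ys.ravel() * y_unit
    return points


def calibrate_from_files(image_paths, cols, rows, unit, win_size=(5, 5)):
    """Hypothetical calibration routine; returns (rms, camera_matrix, dist_coeffs)."""
    import cv2  # imported here so pattern_points can be used without OpenCV

    criteria = (cv2.TERM_CRITERIA_EPS + cv2.TERM_CRITERIA_MAX_ITER, 30, 1e-3)
    object_points, image_points, size = [], [], None
    for path in image_paths:
        gray = cv2.imread(path, cv2.IMREAD_GRAYSCALE)
        if gray is None:
            continue  # unreadable file
        found, corners = cv2.findChessboardCorners(gray, (cols, rows))
        if not found:
            continue  # pattern not detected in this image
        # A small search window keeps the sub-pixel correction local.
        corners = cv2.cornerSubPix(gray, corners, win_size, (-1, -1), criteria)
        object_points.append(pattern_points(cols, rows, unit))
        image_points.append(corners)
        size = gray.shape[::-1]  # (width, height)
    if not image_points:
        raise ValueError("calibration pattern was not found in any image")
    # Rational radial model, tangential coefficients fixed to zero.
    flags = cv2.CALIB_RATIONAL_MODEL + cv2.CALIB_ZERO_TANGENT_DIST
    rms, camera_matrix, dist_coeffs, _, _ = cv2.calibrateCamera(
        object_points, image_points, size, None, None, flags=flags)
    return rms, camera_matrix, dist_coeffs
```

For the left camera of the dataset, a call under these assumptions would be `calibrate_from_files(paths, 8, 9, (0.061, 0.047))`, where `paths` is the list of `*Left.ppm` files.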

`python intrinsics.py remap intrinsics.json --fov FOV --size ROWS COLS IMAGE [IMAGE ...]`

`python intrinsics.py remap data/multimodal_ir_intrinsics.json --fov 40 --size 426 534 data/calibration_sequence_I/*IR1_crop.bmp`

Undistort (i.e., remove radial distortion from) images using the calibration (calls `intrinsics.remap` and `intrinsics.create_camera_matrix`). The images are re-projected into a new pinhole camera with the given parameters (field of view and image size).
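The relation between field of view, image size and camera matrix can be sketched as below. The signatures are hypothetical and several conventions are assumed rather than taken from the assignment: `FOV` is treated as the horizontal field of view in degrees, pixels are square, the size is `(rows, cols)`, and the principal point sits at the image center.

```python
import numpy as np


def create_camera_matrix(fov_deg, size):
    """Pinhole camera matrix for a horizontal field of view (assumed convention)."""
    rows, cols = size
    # Half of the image width subtends half of the field of view.
    f = (cols / 2.0) / np.tan(np.radians(fov_deg) / 2.0)
    return np.array([[f, 0.0, cols / 2.0],
                     [0.0, f, rows / 2.0],
                     [0.0, 0.0, 1.0]])


def camera_field_of_view(camera_matrix, size):
    """Horizontal and vertical field of view in degrees (inverse of the above)."""
    rows, cols = size
    fx, fy = camera_matrix[0, 0], camera_matrix[1, 1]
    fov_x = 2.0 * np.degrees(np.arctan(cols / (2.0 * fx)))
    fov_y = 2.0 * np.degrees(np.arctan(rows / (2.0 * fy)))
    return fov_x, fov_y


if __name__ == "__main__":
    K = create_camera_matrix(40.0, (426, 534))
    print(K)
    print(camera_field_of_view(K, (426, 534)))
```

The second function assumes a centered principal point; for a calibrated camera with an off-center principal point the two half-angles on either side of the optical axis would differ.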

`python intrinsics.py remap intrinsics.json --alpha ALPHA IMAGE [IMAGE ...]`

`python intrinsics.py remap data/multimodal_left_intrinsics.json --alpha 0 data/calibration_sequence_I/*Left.ppm`

`python intrinsics.py remap data/multimodal_left_intrinsics.json --alpha 1 data/calibration_sequence_I/*Left.ppm`

Undistort images using the calibration (calls `intrinsics.remap` and `intrinsics.camera_field_of_view`). Instead of specifying the parameters manually, optimal camera parameters are estimated to ensure that all pixels are valid (`--alpha 0`) or that no information is lost (`--alpha 1`). Any value in between can also be used.

Expected Results

Expected re-projection errors (the 1st output of `cv2.calibrateCamera`) for the multimodal dataset are approximately:

- Left camera: 0.37 px (0.67 px without sub-pixel refinement via `cv2.cornerSubPix`)

- Right camera: 0.33 px (0.68 px)

- IR camera: 0.78 px (1.27 px)
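To interpret these numbers, it helps to recompute the error by hand. The sketch below assumes the reported value is the root-mean-square distance between detected and re-projected corners, taken over all corners of all images. The helper name `rms_reprojection_error` is hypothetical.

```python
import numpy as np


def rms_reprojection_error(observed, projected):
    """RMS distance in pixels between detected and re-projected corners."""
    observed = np.asarray(observed, dtype=float).reshape(-1, 2)
    projected = np.asarray(projected, dtype=float).reshape(-1, 2)
    # Squared Euclidean distance per corner, averaged over all corners.
    squared = np.sum((observed - projected) ** 2, axis=1)
    return float(np.sqrt(squared.mean()))


if __name__ == "__main__":
    detected = [[0.0, 0.0], [1.0, 1.0]]
    reprojected = [[3.0, 4.0], [1.0, 1.0]]
    print(rms_reprojection_error(detected, reprojected))
```

The re-projected corners for one image could be obtained with `cv2.projectPoints` from the pattern points, the pose of that image, the camera matrix and the distortion coefficients.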

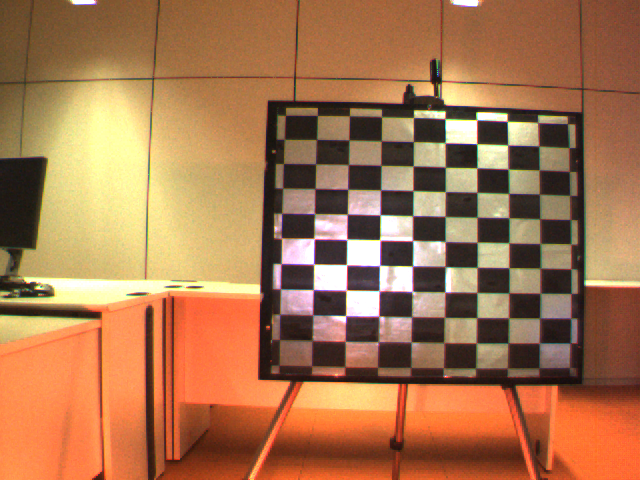

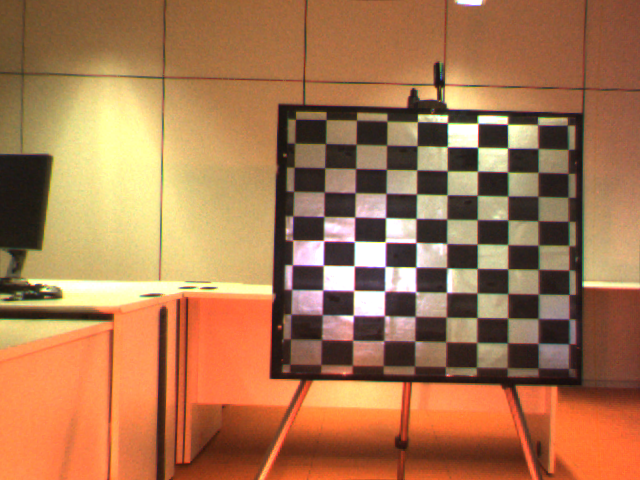

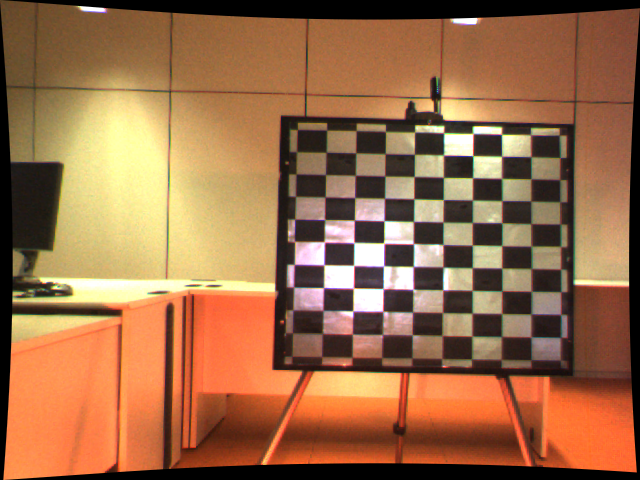

Original and undistorted images from the left camera should look similarly to these:

References

[1] Barrera F., Lumbreras F., Sappa A. Multimodal Stereo Vision System: 3D Data Extraction and Algorithm Evaluation. In IEEE Journal of Selected Topics in Signal Processing, Vol. 6, No. 5, September 2012, pp. 437–446.